The FluxNinja team is excited to launch “rate-limiting as a service” for developers. This is the start of a new category of essential developer tools to serve the needs of the AI-first world, which relies heavily on effective and fair usage of programmable web resources.

Try out FluxNinja Aperture for rate limiting. Join our community on Discord; we appreciate your feedback.

FluxNinja is leading this new category of “managed rate-limiting service” with a first-of-its-kind, reliable, and battle-tested product. Since its first release in 2022, FluxNinja has gone through multiple iterations based on feedback from the open source community and paid customers. We are excited to bring the stable version 1.0 of the service to the public.

The world needs a managed rate-limiting service

Whether you are self-hosting a service or using a managed service, balancing cost and performance remains a challenge. When hosting on your own, you are responsible for scaling to keep up with demand while keeping costs under control. When using a managed service, you have to comply with its request quotas while keeping usage and costs under control.

This is especially true for applications that use Large Language Models (LLMs). If using cloud-based LLMs, you have to comply with their rate-limits. If using self-hosted LLMs, you have to manage the infrastructure and ensure fair usage. And given the high cost of LLMs, and the shortage of resources such as GPUs, it is crucial to ensure fair usage and cost-efficiency.

To ensure fair usage and deliver a good user experience while being profitable, developers need to code and manage rate-limiting and caching infrastructure. This requires significant engineering effort and expertise.

FluxNinja Aperture solves this challenge of building and managing production-grade rate-limiting by providing a managed rate-limiting service to enforce and comply with rate-limits based on various criteria such as:

- Limits based on the number of requests per second

- Per-user limits based on consumed tokens

- Limits based on subscription plans

- Limits based on the token-bucket algorithm

- Limits based on concurrency
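To make a few of these criteria concrete, here is a minimal, generic sketch of a per-user token bucket in Python. It is not Aperture's implementation or SDK; the class names, the `plan_limits` mapping, and the plan values in the usage note are hypothetical and chosen only for illustration. The `cost` parameter lets one request consume more than one token, which is one way to model limits based on consumed LLM tokens.

```python
import time
from typing import Callable, Dict, Tuple


class TokenBucket:
    """Generic token bucket: holds up to `capacity` tokens and refills
    at `fill_rate` tokens per second. Illustrative only."""

    def __init__(self, capacity: float, fill_rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.fill_rate = fill_rate
        self._clock = clock
        self.tokens = capacity          # start with a full bucket
        self._last_refill = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Refill based on elapsed time, then take `cost` tokens if available."""
        now = self._clock()
        elapsed = now - self._last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.fill_rate)
        self._last_refill = now
        if cost <= self.tokens:
            self.tokens -= cost
            return True
        return False


class PerUserLimiter:
    """Keeps one bucket per user, sized by the user's subscription plan.
    `plan_limits` maps a plan name to (capacity, fill_rate); both the
    mapping and the plan names are hypothetical."""

    def __init__(self, plan_limits: Dict[str, Tuple[float, float]],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._plan_limits = plan_limits
        self._clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, user_id: str, plan: str, cost: float = 1.0) -> bool:
        bucket = self._buckets.get(user_id)
        if bucket is None:
            capacity, fill_rate = self._plan_limits[plan]
            bucket = TokenBucket(capacity, fill_rate, self._clock)
            self._buckets[user_id] = bucket
        return bucket.try_acquire(cost)
```

For example, `PerUserLimiter({"free": (5, 1.0), "pro": (50, 10.0)})` would let a free user burst up to 5 requests and then sustain about one per second. This sketch keeps state in a single process; a production setup also has to share that state across application instances, which is the part a managed service takes over.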

FluxNinja takes a unique approach: it separates the rate-limiting infrastructure from the core application, so developers no longer need to code or manage that infrastructure themselves. They only need to integrate the Aperture SDK, and rate-limiting policies can then be updated via UI or API.

We aim to bring production-grade rate-limiting to every app

Overview of FluxNinja Aperture

With FluxNinja Aperture, application developers can enforce rate-limits on the usage of their services or comply with rate-limits of various external services. This ensures reliability of your services, fair usage and cost control.

FluxNinja Aperture provides a managed rate-limiting service that handles the complexities behind the scenes, requiring only simple SDK integration in your application.

These are the key features of FluxNinja Aperture rate-limiting service:

Rate & Concurrency Limiting

Optimize cost and ensure fair access by implementing fine-grained rate-limits. Regulate the use of expensive pay-as-you-go APIs such as OpenAI and reduce the load on self-hosted models such as Mistral.
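As a rough illustration of the concurrency side of this feature, the sketch below caps the number of in-flight calls with a semaphore from the Python standard library. It is a local, single-process stand-in rather than Aperture's mechanism, and `ConcurrencyLimiter` is a hypothetical name.

```python
import threading
from contextlib import contextmanager
from typing import Iterator


class ConcurrencyLimiter:
    """Allows at most `max_in_flight` callers inside `slot()` at once.
    Callers that do not get a slot are told so immediately instead of waiting."""

    def __init__(self, max_in_flight: int) -> None:
        self._slots = threading.BoundedSemaphore(max_in_flight)

    @contextmanager
    def slot(self) -> Iterator[bool]:
        # Non-blocking acquire: True if a slot was free, False otherwise.
        acquired = self._slots.acquire(blocking=False)
        try:
            yield acquired
        finally:
            if acquired:
                self._slots.release()
```

A caller would write `with limiter.slot() as ok:` and only invoke the model when `ok` is true, returning a "try again later" response otherwise. Rejecting immediately is one design choice; queueing the request until a slot frees up is another.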

Caching

Cache LLM results and reuse them for similar requests to reduce cost and boost performance.
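The idea can be sketched with a small in-memory cache. This is an assumption-laden illustration, not how Aperture's cache works: "similar" is approximated here by normalizing case and whitespace before hashing the prompt, and `ResponseCache`, `get_or_compute`, and the TTL policy are hypothetical names and choices.

```python
import hashlib
import time
from typing import Callable, Dict, Tuple


class ResponseCache:
    """In-memory cache of LLM responses with a time-to-live per entry."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def _key(prompt: str) -> str:
        # Assumption: prompts that differ only in case or whitespace are "similar".
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_compute(self, prompt: str, compute: Callable[[str], str]) -> str:
        """Return a fresh cached response, or call `compute` and store its result."""
        key = self._key(prompt)
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]
        result = compute(prompt)
        self._entries[key] = (now, result)
        return result
```

Here `compute` stands for whatever function actually calls the model, so a cache hit skips the paid call entirely. Matching semantically similar prompts would need a different key, such as an embedding lookup, which this sketch does not attempt.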

Request Prioritization

Manage utilization of constrained LLM resources at the level of each request by prioritizing paid over free tier users and interactive over background queries. Ensure fair access across users during peak usage hours.
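One simple way to picture this is a priority queue that decides which waiting request is served next. The sketch below is a generic illustration, not Aperture's scheduler: the `PriorityScheduler` class, the tier names, and the rule that tier outranks interactivity are all assumptions made for the example.

```python
import heapq
import itertools
from typing import Any, List, Optional, Tuple


class PriorityScheduler:
    """Serves queued requests in priority order; ties are served first-in, first-out."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, Any]] = []
        self._counter = itertools.count()  # tie-breaker that preserves arrival order

    def submit(self, request: Any, tier: str, interactive: bool) -> None:
        # Lower number means served earlier. Assumed ordering:
        # paid+interactive (0), paid+background (1),
        # free+interactive (2), free+background (3).
        priority = (0 if tier == "paid" else 2) + (0 if interactive else 1)
        heapq.heappush(self._heap, (priority, next(self._counter), request))

    def next_request(self) -> Optional[Any]:
        """Pop the highest-priority waiting request, or None if the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]
```

Strict priority like this can starve low-priority requests under sustained load, so a scheduler that also aims for fair access across users would typically combine priorities with weights or per-user shares.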

Workload observability

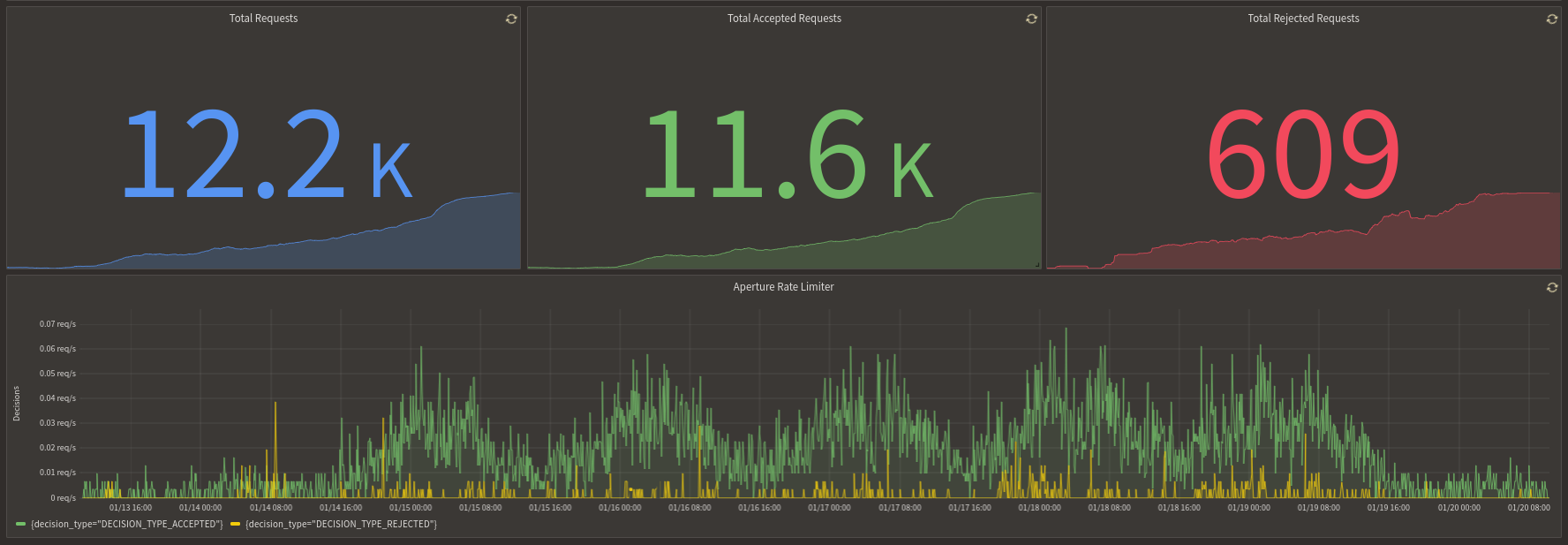

Get unprecedented visibility into your workloads with detailed traffic analytics on request rates, tokens, and latencies sliced by features, users, request types, and any other arbitrary business attribute.

For more info, check out the FluxNinja Aperture docs.

Challenges with traditional rate-limiting solutions

Traditional approaches to rate-limiting, typically involving custom-built solutions with in-memory data stores such as Redis, have presented significant challenges.

Managing the codebase and infrastructure for rate-limiting demands regular attention from engineers and DevOps, incurring significant costs.

API gateways work for limited use cases; they lack the context-specific understanding required for business-aware rate-limiting (e.g., per-user limits or subscription-based restrictions).

There is currently no ready-made solution for a distributed application that needs to comply with the rate-limits of an external service.

These limitations highlight the need for a more efficient, context-aware, and easy-to-manage rate-limiting solution suitable for modern application demands.

How FluxNinja Aperture solves these gaps

Aperture separates rate-limiting infrastructure from the application code. You can self-host it using the Aperture open source package or use the hosted solution, Aperture Cloud. To manage rate-limits, you only need to integrate the Aperture SDK for your programming language.

Benefits compared to custom Redis-based or makeshift solutions:

- No need to code and manage complex rate-limiting algorithms and infrastructure

- Rate-limit policies and algorithms are updated centrally via UI or API rather than application code changes

- Real-time analytics dashboards to monitor and tune configurations

With FluxNinja Aperture, the heavy lifting is offloaded, allowing you to focus on business logic while still retaining control over policies.

FluxNinja Aperture also integrates with existing service mesh and API gateways, giving a quick upgrade to your existing rate-limiting infrastructure.

You can configure rate-limit constraints using Aperture policies, then wrap the code blocks that call these external or internal services with Aperture SDK calls. Using the Aperture Cloud UI, you’ll be able to monitor the workload and the effectiveness of your rate-limit policies.

Check out this example to get started with enforcing or complying with rate-limits using FluxNinja Aperture.

Customer case study

CodeRabbit is a leading AI code review tool and an early adopter of FluxNinja Aperture. The CodeRabbit app consumes several LLM APIs. They offer code review services through various subscription tiers, including a free trial and an unlimited plan for open source projects. The high cost of LLM services and the huge demand for their own service made it a challenge to offer accessible pricing to their users while staying cost-efficient. CodeRabbit uses FluxNinja Aperture to prioritize, cache, and rate-limit requests based on user tier and time criticality. FluxNinja helps them deliver a great user experience while being cost-efficient.

Conclusion (tl;dr)

Rate limiting is crucial for web services, especially for those using Generative AI, to ensure fair usage, cost-efficiency, and a better user experience. Traditional methods often require heavy engineering work and struggle to address more nuanced needs such as user-specific or token-based limits.

FluxNinja Aperture solves this by providing an SDK-driven managed rate-limiting service, making it easy to enforce your own rate-limits and comply with the rate-limits of the services you use. With FluxNinja Aperture, teams do not need to invest their engineering bandwidth in building and maintaining complex rate-limiting infrastructure. You can self-host FluxNinja Aperture on your premises or use the cloud offering at a nominal cost. It is as easy as integrating the FluxNinja Aperture SDK in your Node.js, Python, Golang, or Java backend apps.

The FluxNinja team is excited to unveil this tool publicly for developers. Join us in this journey of bringing production-grade rate-limiting to every app.

Visit FluxNinja Aperture docs to get started with enforcing or complying with rate limits now.